IDC: Demand for Physical Interaction Drives the Development of Physical AI, Accelerating the Evolution of Artificial Intelligence Toward Embodied Intelligence

Zhitong Finance App has learned that as AI technology continues to spread into autonomous physical systems such as robots and autonomous vehicles, the demand for real-world physical interaction capabilities is becoming increasingly prominent, giving rise to physical AI. Its core value lies in giving autonomous machines the ability to “perceive, understand, and execute” in the real physical world, which makes it a key bridge in the evolution of artificial intelligence from virtual intelligence to embodied intelligence.

According to the report “The Era of Physical AI Dawns: Simulation First, Cloud Training to End-Side Deployment, and Efficient Implementation of Intelligent Robots” recently released by IDC, physical AI refers to models that use artificial intelligence to understand, reason about, plan for, and interact with the real world. Such models are usually embedded in autonomous machines such as robots or autonomous vehicles.

The era of physical AI is coming: new market development and momentum

The need for physical interaction is driving the development of physical AI. As robots and unmanned systems spread through manufacturing, healthcare, logistics, and other fields, users are placing higher demands on their intelligence: not only recognition and understanding, but also stable perception, decision-making, and execution in real environments. This urgent need for human-like perception, autonomous decision-making, and precise execution in the physical world is becoming the core driving force behind physical AI, pushing robots to evolve into embodied intelligent robots.

The evolution of AI technology is accelerating the empowerment of physical systems. From visual perception models to decision and control algorithms, and from large-scale pre-trained models to reinforcement learning frameworks, AI is giving systems such as robots and autonomous vehicles stronger autonomous learning and task execution capabilities.

Three major challenges facing physical AI, and the three computing platforms that support it

Applying physical AI in embodied form to autonomous intelligent devices such as robots and automobiles still faces three major technical challenges:

Insufficient generalization of embodied models: models must overcome generalization limits across environments, tasks, and hardware in order to perceive and act reliably in complex, changing real-world scenarios.

Scarce and costly data: training a physical AI model requires large amounts of high-quality, multimodal data, but real-world data is expensive to collect and rarely covers extreme “long-tail scenarios”.

Constrained end-side deployment: limits on on-device computing power, power consumption, and size make it difficult to run embodied models efficiently, which in turn makes a real-time closed loop of perception, decision-making, and execution hard to achieve.

Three major computing platforms support physical AI in addressing these challenges:

Cognitive training platform: provides substantial computing power. Through multimodal perception training and complex decision-making training, it builds, within a unified framework, and continuously refines the perception, understanding, and decision-making capabilities of embodied intelligent models.

Virtual simulation platform: built on professional visual computing resources, it combines high-precision physics engines with digital twin technology to generate realistic, reproducible training data, optimize manipulation and navigation skills at low cost, and verify control logic through software-in-the-loop (SIL) testing.

Real-time deployment platform: using high-performance inference computing resources, it runs the trained physical AI model efficiently on end-side autonomous devices, closing the “perception - decision - execution” loop in real time. The data generated during operation is fed back into the training system, forming a continuous optimization cycle.
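The three platforms form a pipeline with a feedback edge: simulate, train, deploy, then retrain on field data. The following is a minimal, hypothetical Python sketch of that cycle on a toy task (a robot correcting a biased range sensor and stopping before an obstacle). The task, the function names `simulate`, `train`, `control_step`, and `run_cycle`, and every number are illustrative assumptions, not details from IDC's report.

```python
import random
from dataclasses import dataclass
from statistics import mean

# Hypothetical toy task: a robot reads a noisy, biased range sensor and must
# stop before an obstacle. All names and numbers are illustrative assumptions.

@dataclass
class Sample:
    reading: float   # raw sensor reading (metres)
    distance: float  # ground-truth distance (metres)

def simulate(seed: int, n: int, sensor_bias: float = 0.3) -> list[Sample]:
    """Virtual simulation: seeded, so the same seed reproduces the same data.
    Rare near-obstacle cases are deliberately oversampled (long tail)."""
    rng = random.Random(seed)
    samples = []
    for _ in range(n):
        # About 10% of episodes are drawn from a rare near-collision band
        if rng.random() < 0.1:
            d = rng.uniform(0.05, 0.3)
        else:
            d = rng.uniform(0.3, 5.0)
        samples.append(Sample(d + sensor_bias + rng.gauss(0, 0.02), d))
    return samples

@dataclass
class Model:
    bias: float = 0.0  # learned sensor offset

def train(data: list[Sample]) -> Model:
    """Cognitive training: estimate the sensor offset from labelled samples."""
    return Model(bias=mean(s.reading - s.distance for s in data))

def control_step(model: Model, reading: float, safety_margin: float = 0.5) -> str:
    """Real-time deployment: perceive (correct the reading), decide, act."""
    estimated = reading - model.bias  # perception
    return "stop" if estimated < safety_margin else "forward"  # decision/action

def run_cycle(seed: int) -> tuple[Model, list[str]]:
    training_set = simulate(seed, n=1000)  # 1. simulation first
    model = train(training_set)            # 2. cloud training
    # Stand-in for real-world logs (assumed labelled) with a slightly drifted sensor
    field_log = simulate(seed + 1, n=50, sensor_bias=0.35)
    actions = [control_step(model, s.reading) for s in field_log]  # 3. end-side deployment
    model = train(training_set + field_log)  # 4. feedback: field data retrains the model
    return model, actions
```

Seeding the simulator is what makes the synthetic data reproducible. In a real system each toy function would be replaced by a physics engine, a cloud training job, and an on-device inference runtime respectively.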

Future Prospects: Embodied Intelligent Robots Accelerate Development

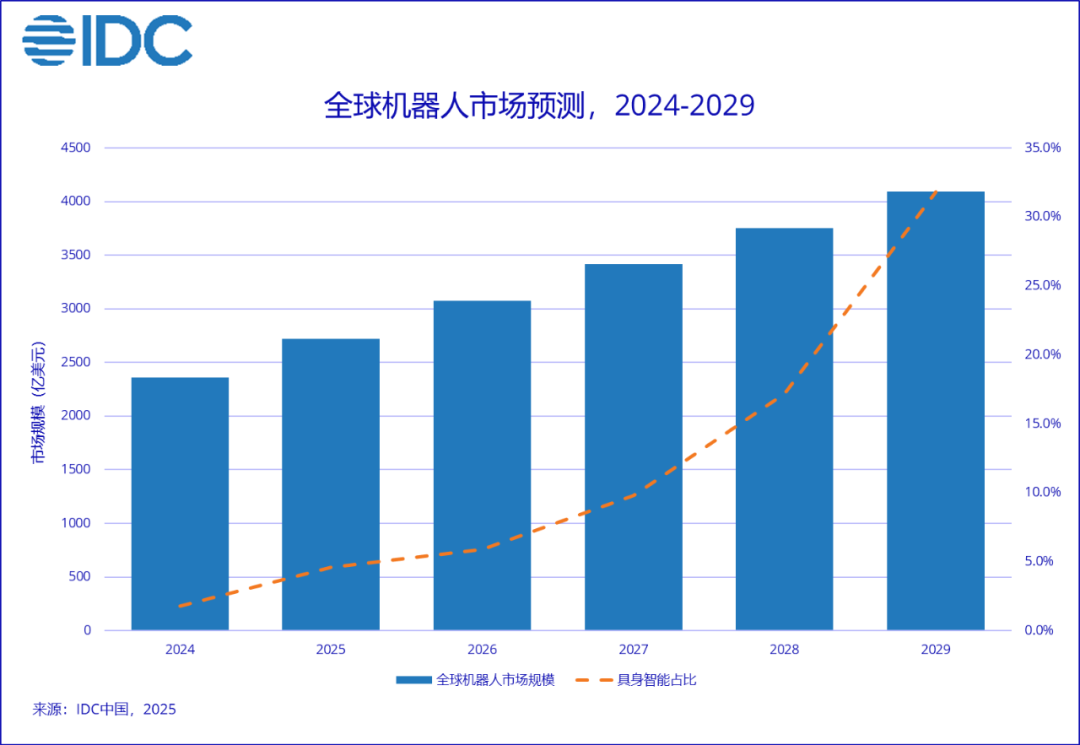

As physical AI and the three major computing platforms continue to mature, embodied intelligent robots are becoming the core direction of robot evolution in the physical AI era. Their real-world deployment continues to accelerate, and their prospects are increasingly broad. IDC predicts that by 2029 the global robot market will exceed US$400 billion, with embodied intelligent robots becoming a key form that accounts for more than 30% of the market and leads robots toward a higher stage of generalization and autonomy.
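Combining IDC's two 2029 figures gives a rough lower bound on the embodied-robot segment. This is simple arithmetic on the forecast, not a number IDC itself states:

```latex
\text{Embodied robot market}_{2029} > 0.30 \times \text{US}\$400\ \text{billion} = \text{US}\$120\ \text{billion}
```

Because both the total ("exceed") and the share ("more than") are lower bounds, their product is also a lower bound.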

Li Junlan, research manager of IDC's China Emerging Technology Research Department, said that physical AI will be deployed in robotics at an accelerating pace along the “three major computing platforms” path of “simulation first - cloud training - end-side deployment”. Virtual simulation computing, through a complete simulation platform and world models, gives robots low-cost, highly reproducible simulation data and training grounds. Cognitive training computing relies on scalable cloud computing resources to accelerate the generalized training of large intelligent models. Meanwhile, real-time deployment computing focuses on end-side computing requirements and resource efficiency, unifying the perception-execution closed loop with data feedback on the robot body itself.